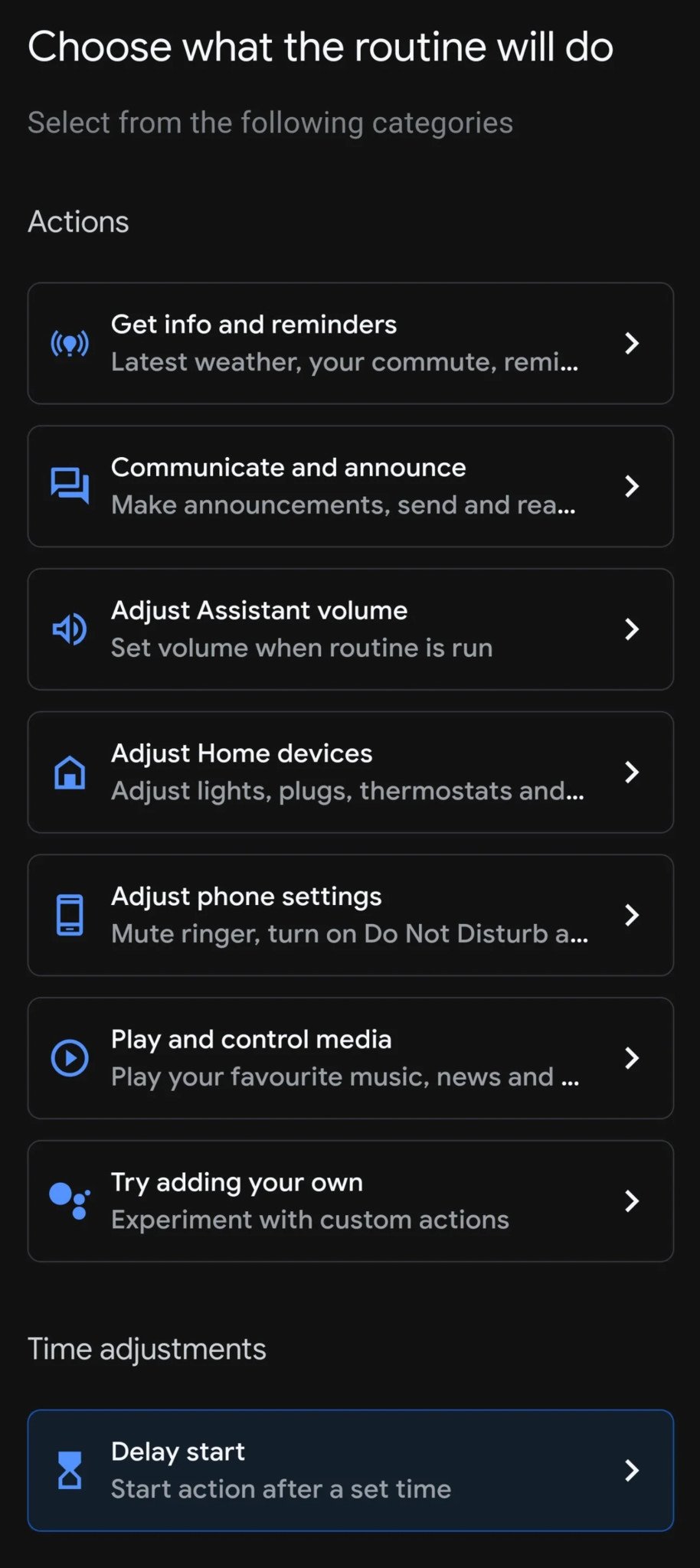

You can adjust when Assistant commands are triggered using Google Home's new time adjustments option.

What you need to know

- A new feature to delay actions in custom Google Assistant routines has been spotted in the Google Home app.

- The new capability lets you set an amount of time before a specific action in your routines starts.

- It is available to some users in the UK for now.

Routines are one of Google Assistant's coolest features: they let you trigger a series of tasks by saying a single command. Until now, though, there has been no way to control when those actions start. It now looks like Google Home has added an option to delay your Assistant commands.

A Reddit user (u/Droppedthe_ball) posted a screenshot of a new Google Home app feature that lets you set when a specific task in your routines starts. The new time adjustments option comes in handy when you don't want some of the best Google Home compatible devices to act right away, for example when your bedroom lights shouldn't turn on yet, or your coffee maker shouldn't start brewing until you're ready to take a sip.

However, it appears the new capability applies only to custom routines you've created. The Redditor noted that time adjustments are not visible in any of the pre-programmed Assistant routines like "Good morning" or "Commute". This means the tasks you've added to those routines will be carried out immediately one after another once you say the command phrase.

The new capability appears at the bottom of the routines list as a "delay start" option. When you tap it, you can use a timer wheel to set how long to wait before an action starts.

At the moment, the feature seems to be available in version 2.42.1.14 of the Google Home app for some users in the UK. That said, it could arrive for everyone soon.

Source: androidcentral